In other recent links:

-

In the mid-19th century, William Rowan Hamilton tried to commercialize his work on geometric symmetry by marketing a puzzle / game involving finding polygons through all the vertices of a dodecahedron (\(\mathbb{M}\)). It was not a commercial success, and only a handful of copies of his game are known to survive, but this work is the reason we now call cycles through all vertices of a graph Hamiltonian cycles. See the Wikipedia article on Hamilton’s icosian game, newly promoted to Good Article.
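By hand, finding such a cycle is the whole puzzle. By computer it is a small backtracking search. The sketch below is a hypothetical illustration, not Hamilton's own method. `dodecahedron_graph` builds the dodecahedron's 20 vertices and 30 edges from a standard planar drawing, with an arbitrary vertex numbering. `hamiltonian_cycle` extends a path one vertex at a time and backs up whenever it gets stuck.

```python
def dodecahedron_graph():
    """Adjacency sets for the 20-vertex dodecahedral graph.

    Vertices 0-4 form an outer pentagon, 5-14 a middle 10-cycle, and
    15-19 an inner pentagon (a standard planar drawing of the dodecahedron).
    """
    adj = {v: set() for v in range(20)}

    def link(a, b):
        adj[a].add(b)
        adj[b].add(a)

    for i in range(5):
        link(i, (i + 1) % 5)              # outer pentagon
        link(15 + i, 15 + (i + 1) % 5)    # inner pentagon
        link(i, 5 + 2 * i)                # outer spoke to an even middle vertex
        link(15 + i, 5 + 2 * i + 1)       # inner spoke to an odd middle vertex
    for j in range(10):
        link(5 + j, 5 + (j + 1) % 10)     # middle 10-cycle
    return adj


def hamiltonian_cycle(adj, start=0):
    """Return a list of vertices forming a Hamiltonian cycle, or None.

    Plain depth-first backtracking: extend a path one vertex at a time and
    undo the last choice whenever the path gets stuck.
    """
    n = len(adj)
    path = [start]
    on_path = {start}

    def extend():
        if len(path) == n:
            return start in adj[path[-1]]  # can we close the cycle?
        for w in sorted(adj[path[-1]]):
            if w not in on_path:
                path.append(w)
                on_path.add(w)
                if extend():
                    return True
                path.pop()                 # dead end: undo and try the next neighbor
                on_path.remove(w)
        return False

    return list(path) if extend() else None


cycle = hamiltonian_cycle(dodecahedron_graph())
print(cycle)
```

Backtracking takes exponential time in the worst case, as expected for an NP-complete problem like this one. For a graph this small, it is plenty.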

-

Lance Fortnow sorts out the difference between complexity classes \(\mathsf{E}\) and \(\mathsf{EXP}\) (\(\mathbb{M}\)). The one with the shorter name is the more restrictive: it allows time singly exponential in the input size, \(2^{O(n)}\). The other allows time exponential in any polynomial of the input size, \(2^{n^{O(1)}}\). This leads to a quick proof that, for instance, \(\mathsf{E}\ne\mathsf{PSPACE}\), because the two classes do not have the same closure properties. Unfortunately, not all publications on this subject follow this standard nomenclature.
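For reference, with \(n\) the input length, the standard definitions are

\[ \mathsf{E}=\bigcup_{c\ge 1}\mathsf{DTIME}\bigl(2^{cn}\bigr), \qquad \mathsf{EXP}=\bigcup_{k\ge 1}\mathsf{DTIME}\bigl(2^{n^k}\bigr). \]

Here is a sketch of the closure argument via the usual padding trick. This is the textbook version, not necessarily how Fortnow phrases it. For \(L\in\mathsf{DTIME}(2^{n^k})\), define the padded language

\[ L'=\{\,x10^{|x|^k} : x\in L\,\}. \]

\(L'\) is in \(\mathsf{E}\): its inputs have length \(m\ge|x|^k\), so running the decider for \(L\) on \(x\) takes time \(2^{O(|x|^k)}\le 2^{O(m)}\). The map \(x\mapsto x10^{|x|^k}\) is a polynomial-time reduction from \(L\) to \(L'\), so every language in \(\mathsf{EXP}\) reduces to one in \(\mathsf{E}\). \(\mathsf{PSPACE}\) is closed under polynomial-time reductions. If \(\mathsf{PSPACE}\) equaled \(\mathsf{E}\), we would get \(\mathsf{EXP}\subseteq\mathsf{E}\), contradicting the time hierarchy theorem.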

-

Book I of Euclid’s Elements formalized in Lean 4 (\(\mathbb{M}\)), using System E by Avigad, Dean, and Mumma. System E is intended to formalize, rather than replace, the diagrammatic reasoning that Euclid uses so often.

-

Bruno Postle on Piranesi’s fake perspectives and how to model them mathematically (\(\mathbb{M}\)). For instance, a perspective view of a sequence of repeated arches should show them in different shapes (you can see farther into the arches that you have a more direct view of) but Piranesi drew them as scaled copies of the same arch. Postle suggests that this gives more pleasing perspective drawings than true perspective for other similar subjects, such as rows of buildings along a street.
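Here is a deliberately simplified sketch of why true perspective cannot produce scaled copies. It uses a hypothetical one-dimensional model, not Postle's. Each arch is a rectangular hole through a wall of some thickness, and the eye sits at the origin looking along the \(z\) axis. `see_through_fraction` measures how much of an arch's projected front opening lines up with its back opening, so that you can see all the way through.

```python
def project(x, z, focal=1.0):
    """Pinhole projection of lateral position x at depth z onto the image plane."""
    return focal * x / z


def see_through_fraction(x_center, z_front, depth, width):
    """Fraction of an arch's projected front opening through which the back
    opening is also visible (horizontal direction only).

    Hypothetical model: the arch is a rectangular hole of the given width
    through a wall of the given depth, facing the viewer, centered at lateral
    offset x_center; the eye is at the origin looking along +z.
    """
    front = (project(x_center - width / 2, z_front),
             project(x_center + width / 2, z_front))
    back = (project(x_center - width / 2, z_front + depth),
            project(x_center + width / 2, z_front + depth))
    overlap = min(front[1], back[1]) - max(front[0], back[0])
    return max(0.0, overlap) / (front[1] - front[0])


# A row of identical arches in a wall 10 units away and 1 unit thick,
# viewed from directly in front of the first arch.
for x in (0, 2, 4, 6):
    print(x, round(see_through_fraction(x, z_front=10, depth=1, width=2), 3))
```

For a row of arches in a wall facing the viewer, the fraction shrinks steadily for arches farther off to the side, so their projected shapes really do differ. In a drawing made of scaled copies, the fraction would be the same for every arch.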

-

An asymptotic expansion (\(\mathbb{M}\)) is a mathematical series, often divergent, that still provides a useful approximation to something if you truncate it early enough. Maybe the most famous is the Stirling series, an infinite series in powers of \(1/n\) approximating the factorial, which when truncated gives Stirling’s approximation. The full series diverges for every fixed argument \(n\). For large \(n\), however, it takes many terms before it starts to diverge, and it becomes very accurate before it does.
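The following sketch checks this behavior numerically. It uses the standard form of the Stirling series for \(\ln n!\),
\(n\ln n - n + \tfrac12\ln(2\pi n) + \sum_{k\ge1} \frac{B_{2k}}{2k(2k-1)n^{2k-1}}\),
where \(B_{2k}\) are the Bernoulli numbers. It compares each truncation against Python's `math.lgamma`. The helper names are hypothetical.

```python
from fractions import Fraction
from math import comb, lgamma, log, pi


def bernoulli_numbers(count):
    """Exact Bernoulli numbers B_0 .. B_{count-1}, with the convention B_1 = -1/2."""
    b = [Fraction(0)] * count
    b[0] = Fraction(1)
    for m in range(1, count):
        b[m] = -sum(comb(m + 1, j) * b[j] for j in range(m)) / (m + 1)
    return b


def stirling_log_factorial(n, terms, bern):
    """ln(n!) from the Stirling series, keeping `terms` correction terms."""
    total = n * log(n) - n + 0.5 * log(2 * pi * n)
    for k in range(1, terms + 1):
        total += float(bern[2 * k]) / (2 * k * (2 * k - 1) * n ** (2 * k - 1))
    return total


def truncation_errors(n, max_terms):
    """Absolute error of each truncation, from 0 up to max_terms correction terms."""
    bern = bernoulli_numbers(2 * max_terms + 1)
    exact = lgamma(n + 1)  # ln(n!) computed independently
    return [abs(stirling_log_factorial(n, m, bern) - exact)
            for m in range(max_terms + 1)]


for m, err in enumerate(truncation_errors(1, 12)):
    print(m, f"{err:.2e}")
```

At \(n=1\), the error is smallest after three correction terms and then grows without bound. At \(n=10\), the same three terms already give an error below \(10^{-9}\).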

I’m convinced there are non-mathematical situations with analogous behavior. One I frequently encounter involves the bots set loose on Wikipedia to clean up the citations to the literature in the references of Wikipedia articles. Usually, a bot-cleaned citation is better than a hand-written one, so it is useful to have a bot clean the citations. Once or twice. But the bots tend to run over the same citations many times, occasionally introducing minor errors, like adding a DOI that turns out to point to something related to the cited work rather than to the work itself. After that point, repeated passes of the bot can amplify the mistake until the citation becomes so garbled that one cannot tell what it was originally meant to refer to.

Is there a name for this real-world phenomenon?

-

Bookends (\(\mathbb{M}\)):

-

Mark Wilson solicits surveys on the state of mathematics on the internet for Notices of the AMS.

-

Scott Aaronson on “the Zombie Misconception of Theoretical Computer Science” (\(\mathbb{M}\)): the mistaken idea that concepts like \(\mathsf{NP}\)-completeness and uncomputability (which apply to functions or infinite sequences) can instead be applied to individual values and individual yes-or-no questions.

-

Journal publisher caught adding falsified citations (\(\mathbb{M}\), arXiv): citations that do not appear in its actual published papers, slipped into the metadata it supplies to bibliographic databases.

-

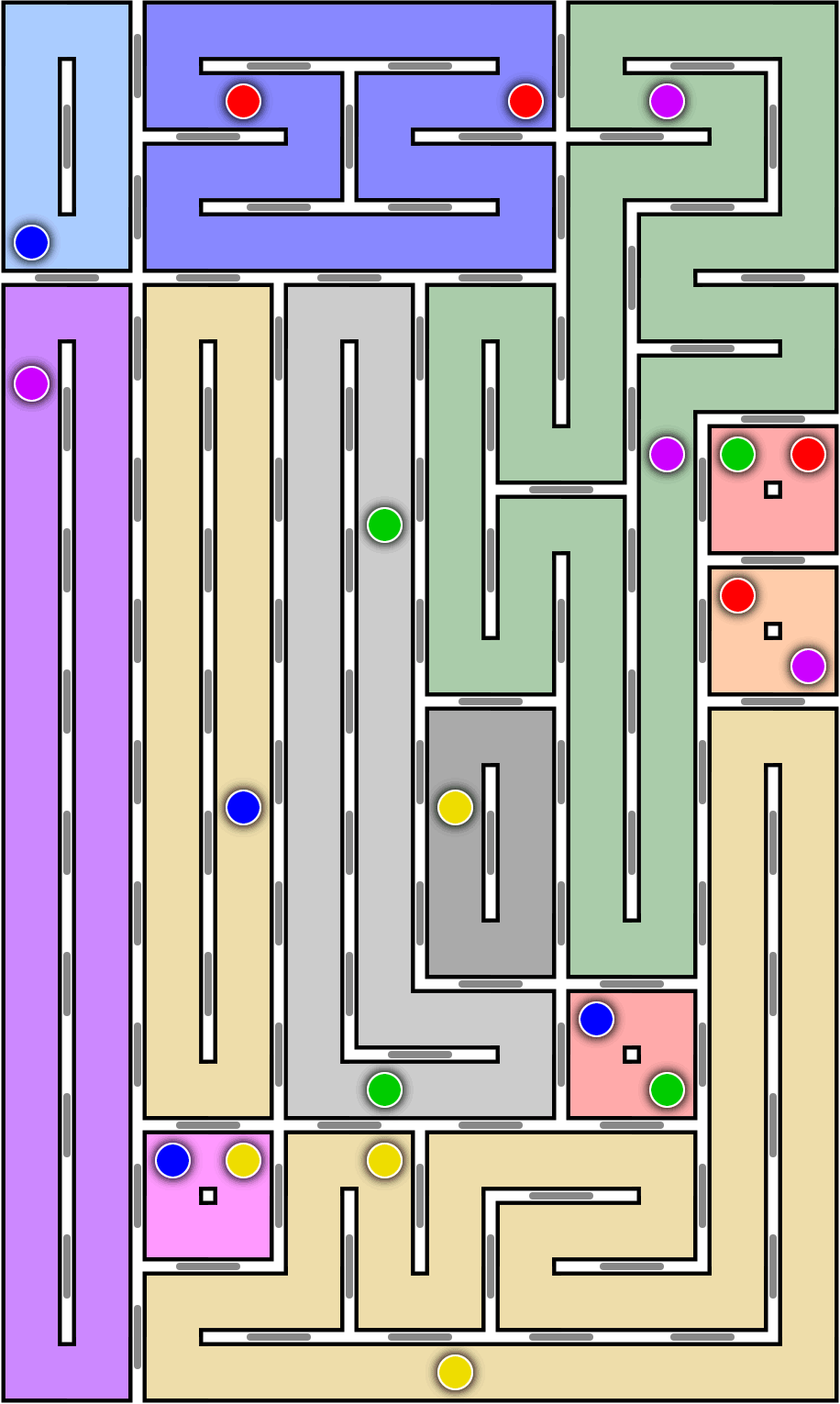

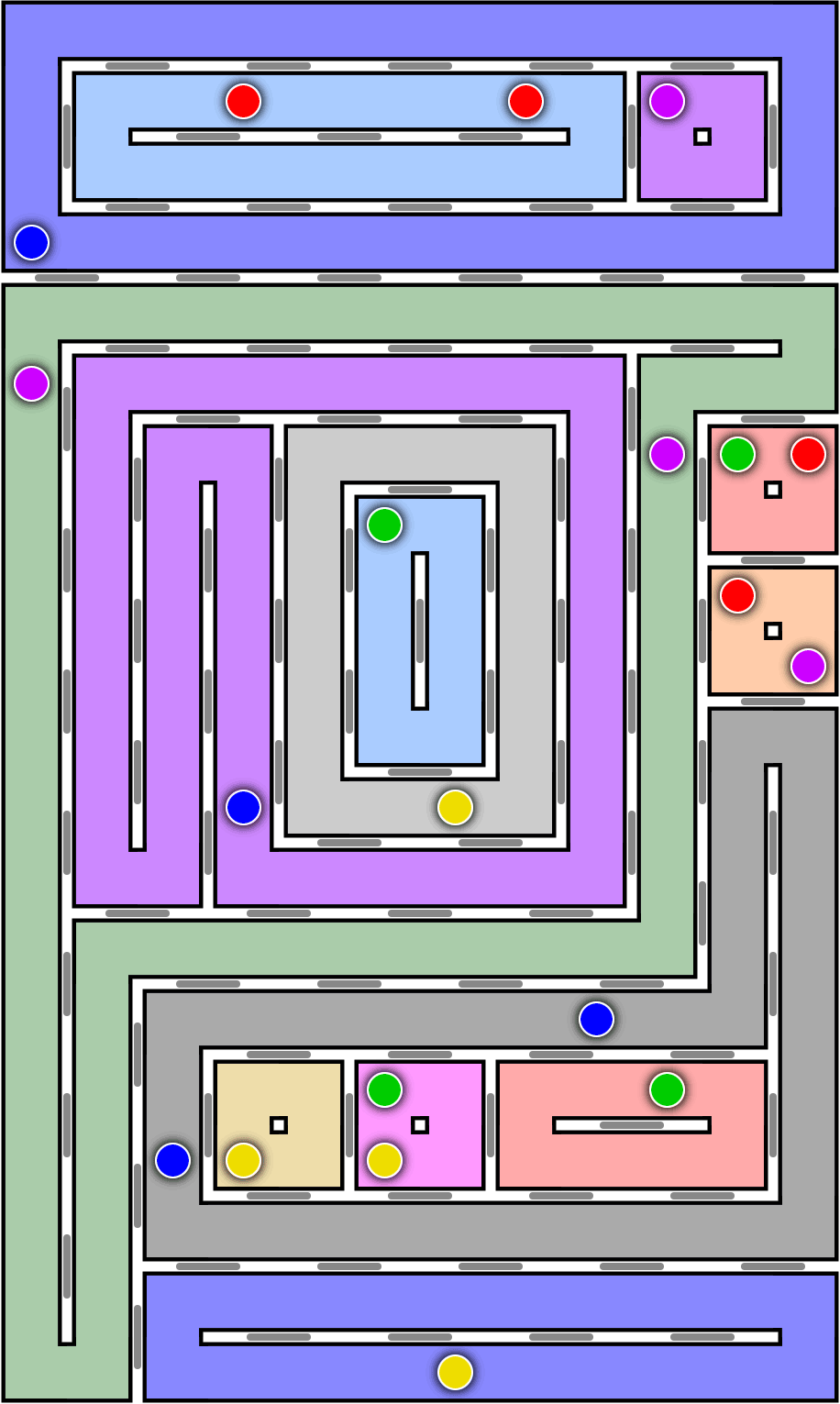

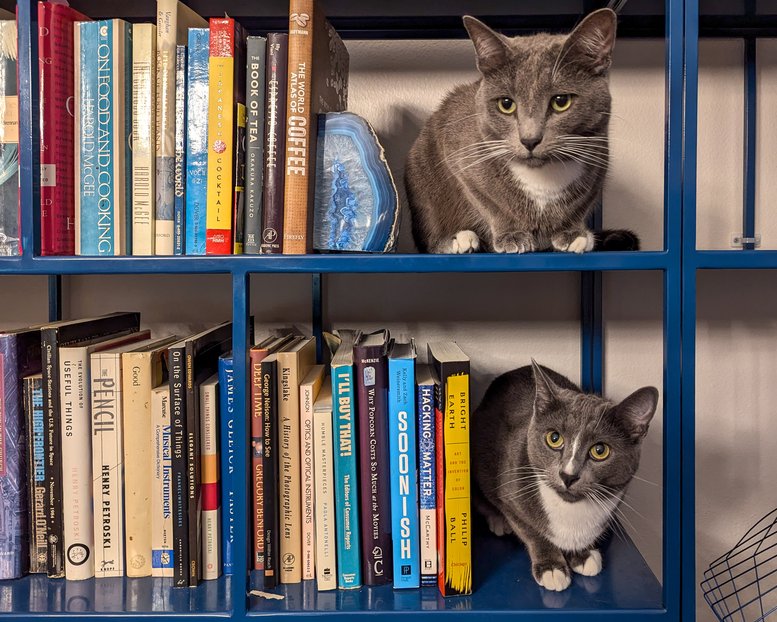

Dave Richeson’s first-round “big internet math-off” entry (\(\mathbb{M}\)) involved 3d-printing a demo of grid bracing, in which graph theory shows up unexpectedly in the problem of designing bookshelves so they don’t fall down and you don’t have to special-order the little shelf clip things and fuss around with wood putty to put them together again almost like new.
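The graph theory in question is a classical criterion due to Bolker and Crapo. For a rectangular grid of square cells, form a bipartite graph with one vertex per row, one vertex per column, and an edge for each braced cell. The braced grid is rigid exactly when this graph is connected. Here is a minimal sketch of that test using union-find. The function name and input format are hypothetical.

```python
def is_rigid(rows, cols, braced):
    """Decide whether a rows x cols grid of square cells, with diagonal braces
    in the cells listed in `braced` as (row, col) pairs, is rigid.

    Criterion: build a bipartite graph with a vertex per row and per column and
    an edge (r, c) for each braced cell; the grid is rigid iff it is connected.
    """
    parent = list(range(rows + cols))  # union-find over row and column vertices

    def find(v):
        while parent[v] != v:
            parent[v] = parent[parent[v]]  # path halving
            v = parent[v]
        return v

    for r, c in braced:
        parent[find(r)] = find(rows + c)   # join row r with column c

    root = find(0)
    return all(find(v) == root for v in range(rows + cols))


print(is_rigid(2, 2, [(0, 0), (1, 1)]))          # False: diagonal pair still flexes
print(is_rigid(2, 2, [(0, 0), (0, 1), (1, 0)]))  # True: L-shaped bracing is rigid
```

A rigid bracing of an \(m\times n\) grid therefore needs at least \(m+n-1\) braces, one for each edge of a spanning tree of the row-column graph.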

-

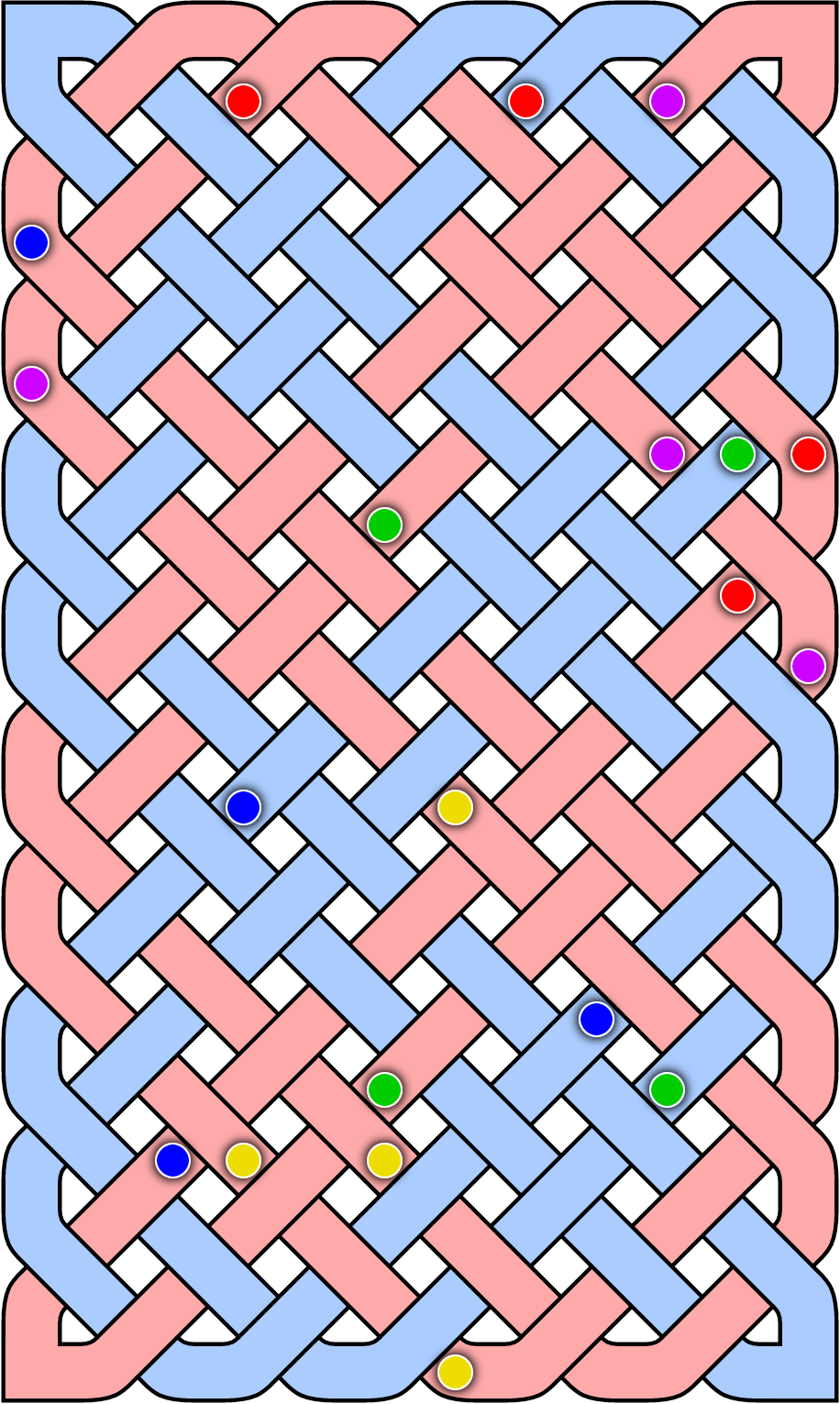

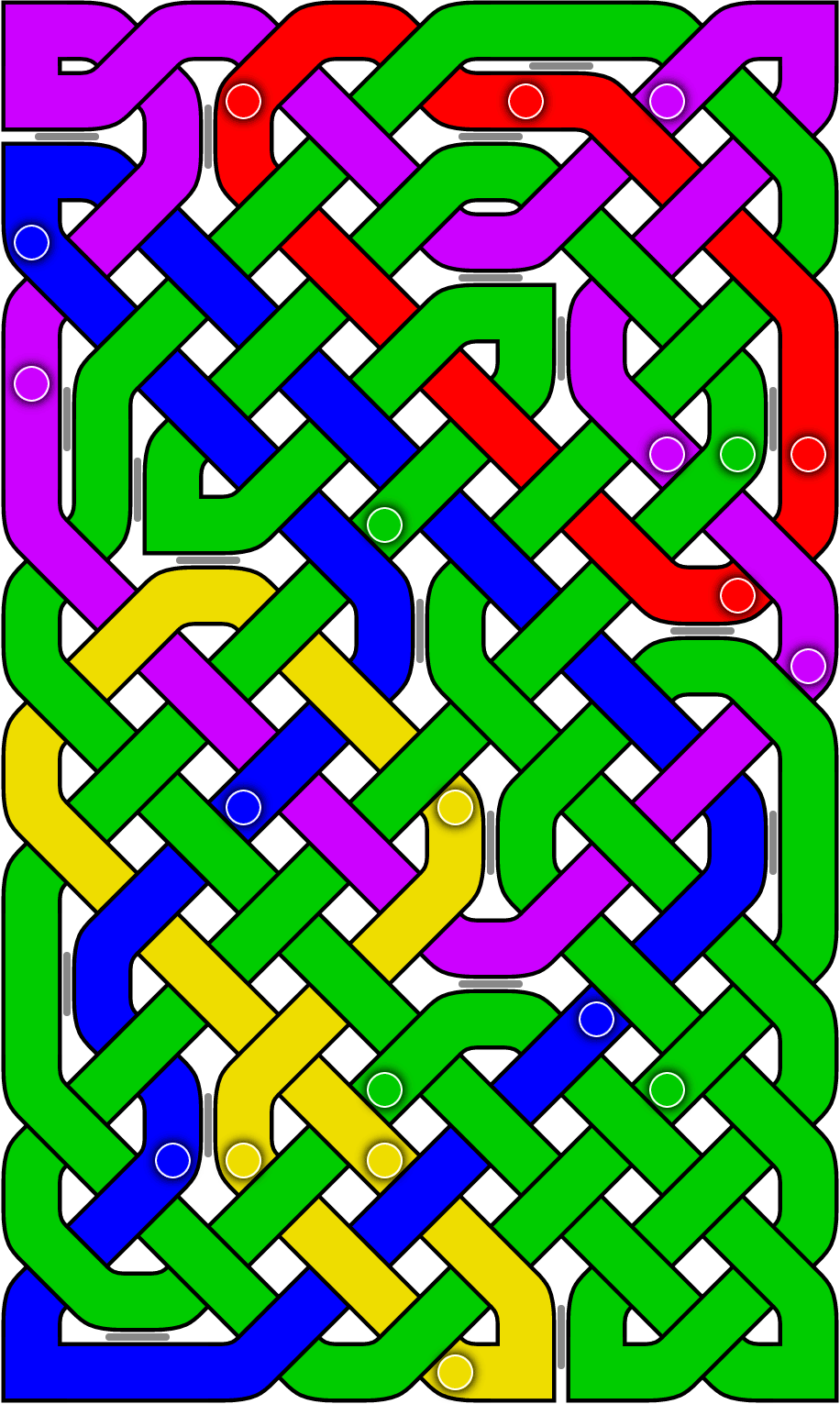

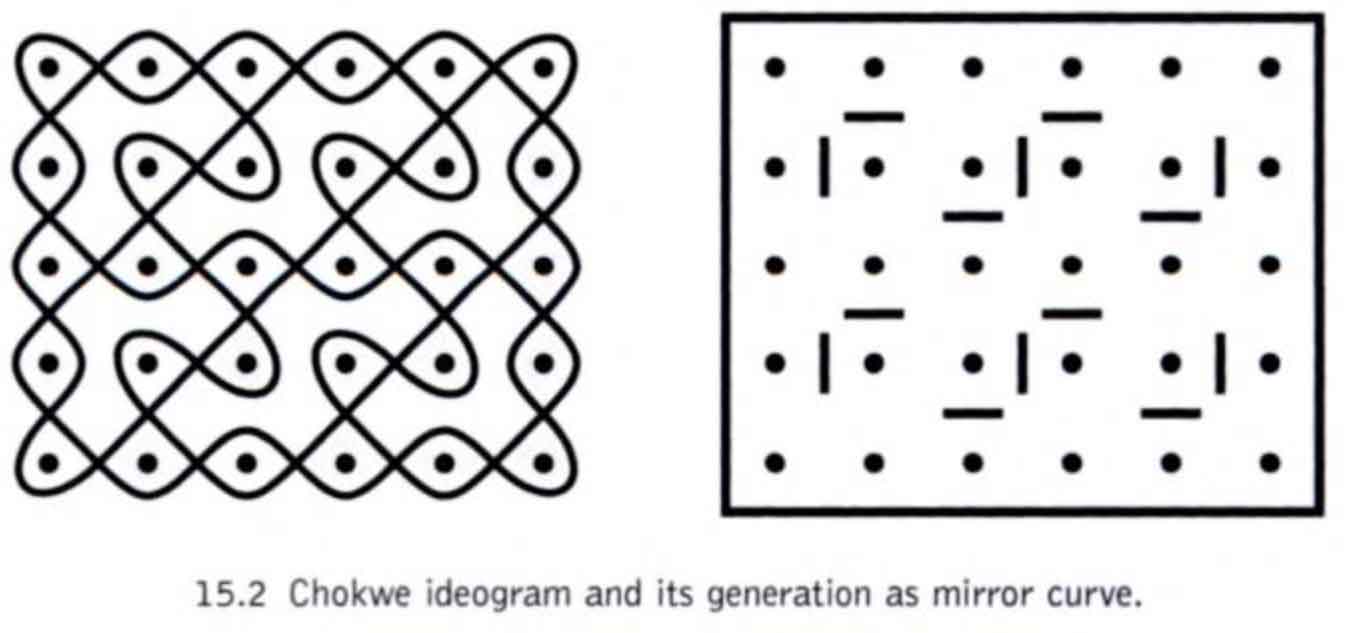

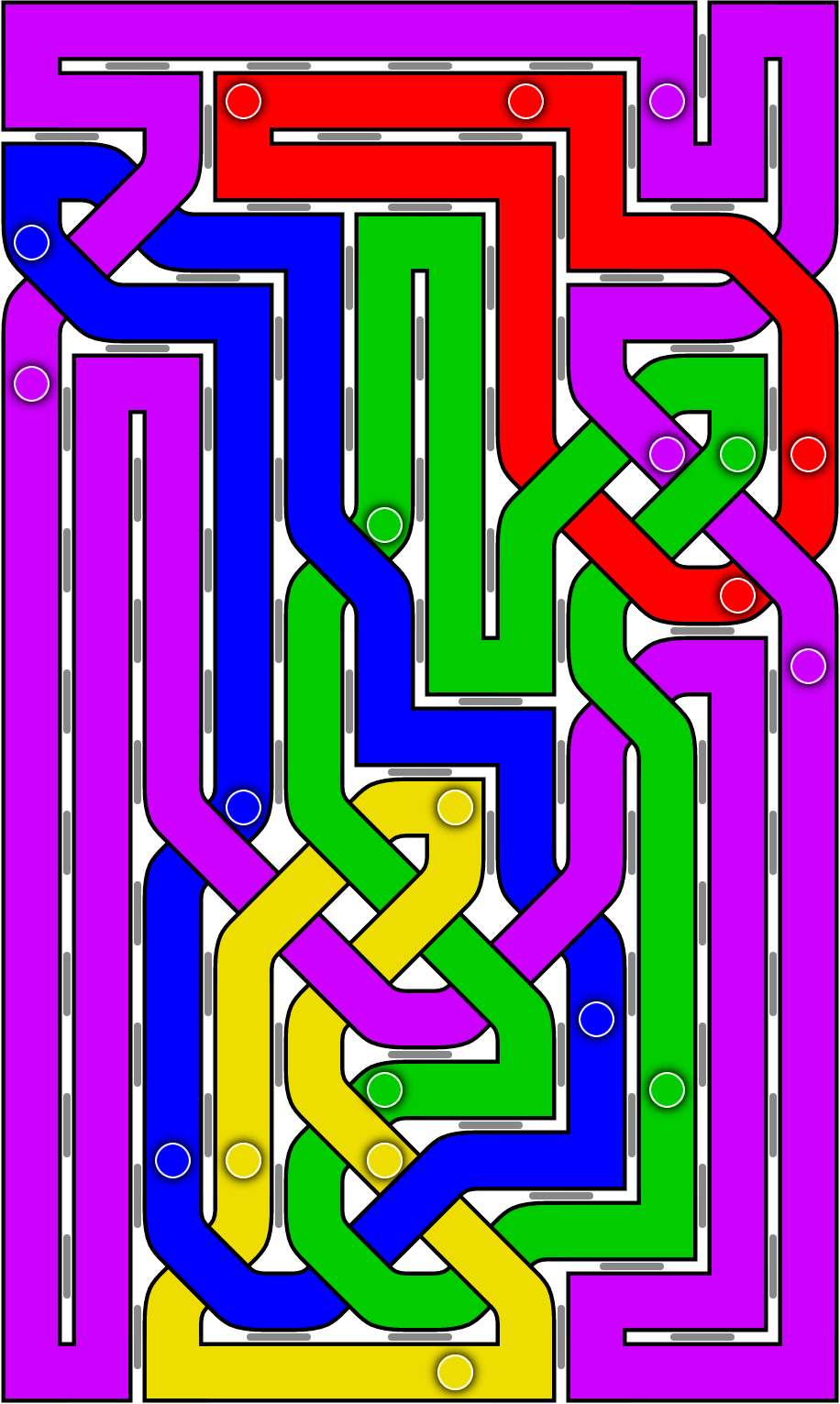

Bathsheba Grossman is kick-starting a project to make large-format bronze-infused resin versions of her mathematical sculptures. See also Bjarne Jespersen’s Pocket Art, similar interlocked symmetric tangles but painstakingly carved out of wood (\(\mathbb{M}\)). I’m very fond of my smaller Grossman piece, which has the same shape as the ones shown in her post but is 3d-printed lost-wax bronze. Still, I think she did the right thing in switching to media that need less laborious hand-finishing. It’s the creativity in the design, not the hand work, that makes her art interesting to me.

-

Quanta on the calculation of the fifth busy beaver number (\(\mathbb{M}\), see also).

-

Rollercoaster loop shapes (\(\mathbb{M}\)), Ann-Marie Pendrill (2005). A recent post on Boing Boing led me down a rabbit hole of earlier and earlier posts and explanatory videos, seemingly converging here. The basic idea is to even out the forces felt by riders, which produces the characteristic teardrop shape of roller-coaster vertical loops. Pendrill discusses several questions:

- whether centripetal or total acceleration should be balanced,
- whether acceleration should increase suddenly or ramp up gradually,
- the different shapes that result from these choices,
- approximations that replace parts of the loop by circular arcs,
- some ideas on how to tell the difference.
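As a rough illustration of one of these choices, here is a hypothetical sketch, not Pendrill's code. It traces a loop that keeps the centripetal acceleration \(v^2\kappa\) constant, assuming a frictionless point-mass train so that \(v^2 = v_0^2 - 2gh\). Because the train slows as it climbs, the curvature must increase, and that produces the teardrop.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def constant_centripetal_half_loop(v0, a_c, ds=1e-3):
    """Trace a loop from its bottom (heading horizontally) to its top (heading
    back the other way), choosing the curvature at each point so that the
    centripetal acceleration v^2 * curvature stays equal to a_c.

    Assumes a frictionless point-mass train, so v^2 = v0^2 - 2 G h.
    Returns the list of (x, h) points and the speed squared at the top.
    """
    x = h = theta = 0.0               # theta = direction of travel, 0 = horizontal
    points = [(x, h)]
    while theta < math.pi:
        v2 = v0 * v0 - 2 * G * h
        if v2 <= 0:
            raise ValueError("train stalls before reaching the top")
        theta += (a_c / v2) * ds      # curvature = a_c / v^2
        x += math.cos(theta) * ds
        h += math.sin(theta) * ds
        points.append((x, h))
    return points, v0 * v0 - 2 * G * h


v0, a_c = 20.0, 4 * G                 # example entry speed (m/s) and target acceleration
points, v2_top = constant_centripetal_half_loop(v0, a_c)
print("height of loop:", round(points[-1][1], 2), "m")
print("radius at bottom:", round(v0 * v0 / a_c, 2), "m")
print("radius at top:", round(v2_top / a_c, 2), "m")
```

This particular choice also has a closed-form check. Separating variables in \(d\theta/dh = a_c/\bigl((v_0^2-2gh)\sin\theta\bigr)\) gives \(1-\cos\theta = -\frac{a_c}{2g}\ln\bigl(1-2gh/v_0^2\bigr)\). At the top (\(\theta=\pi\)), the radius is therefore the bottom radius times \(e^{-4g/a_c}\). With \(a_c = 4g\), the top of the loop is tighter than the bottom by a factor of \(e\).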